Most GPUs use between 75 and 450 watts, depending on the model and workload. Entry-level GPUs draw about 75 to 150 W, mid-range GPUs draw about 150 to 250 W, and high-end GPUs draw 300 to 450 W during gaming or other heavy tasks.

This guide explains how much power GPUs consume, why graphics cards use so much electricity, and the factors that affect their power consumption.

What Is GPU Power Consumption?

GPU power consumption refers to the amount of electricity a graphics card uses while performing tasks. Your GPU receives electrical power from the computer’s power supply unit (PSU) and converts that energy into processing performance for graphics, gaming, video rendering, and AI workloads.

Power usage is measured in watts (W). One watt represents one joule of energy used per second.

However, a GPU does not consume the same amount of power at all times. Its consumption constantly changes depending on workload and system conditions.

For example:

- A modern GPU may use 15 to 30 watts while idle

- The same GPU can consume 200 to 400 watts during gaming

Understanding GPU power consumption helps users choose the correct power supply, manage electricity costs, and control system temperatures.
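Because one watt is one joule per second, a 1-watt load running for one hour uses 1 watt-hour (3,600 joules). Electricity bills are priced in kilowatt-hours, so a card's cost depends on both its wattage and how long it runs at each level. The sketch below estimates daily energy from idle and gaming hours. The wattages follow the ranges above. The hours per day and the price per kilowatt-hour (`price_per_kwh`) are hypothetical assumptions, so replace them with your own.

```python
def gpu_energy_kwh(idle_watts: float, idle_hours: float,
                   load_watts: float, load_hours: float) -> float:
    """Return daily GPU energy in kilowatt-hours (kWh).

    Energy (Wh) = power (W) x time (h); dividing by 1,000 gives kWh.
    """
    watt_hours = idle_watts * idle_hours + load_watts * load_hours
    return watt_hours / 1000


def yearly_cost(daily_kwh: float, price_per_kwh: float) -> float:
    """Estimate yearly cost, assuming identical usage every day."""
    return daily_kwh * 365 * price_per_kwh


# Hypothetical usage: 6 hours idle at 20 W, 3 hours of gaming at 250 W.
daily = gpu_energy_kwh(idle_watts=20, idle_hours=6, load_watts=250, load_hours=3)
print(f"Daily energy: {daily:.2f} kWh")
# Hypothetical price of 0.15 per kWh; use your local electricity rate.
print(f"Yearly cost: {yearly_cost(daily, price_per_kwh=0.15):.2f}")
```

In this example the gaming hours account for most of the energy (750 of 870 watt-hours), even though the card spends twice as long idle.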

What Does GPU Power Consumption Mean?

GPU power consumption describes how much electrical energy the graphics card needs to perform calculations and render graphics.

A GPU performs billions or even trillions of operations every second. These tasks include:

- shading calculations

- lighting effects

- geometry processing

- physics simulations

Every one of these operations requires electrical power.

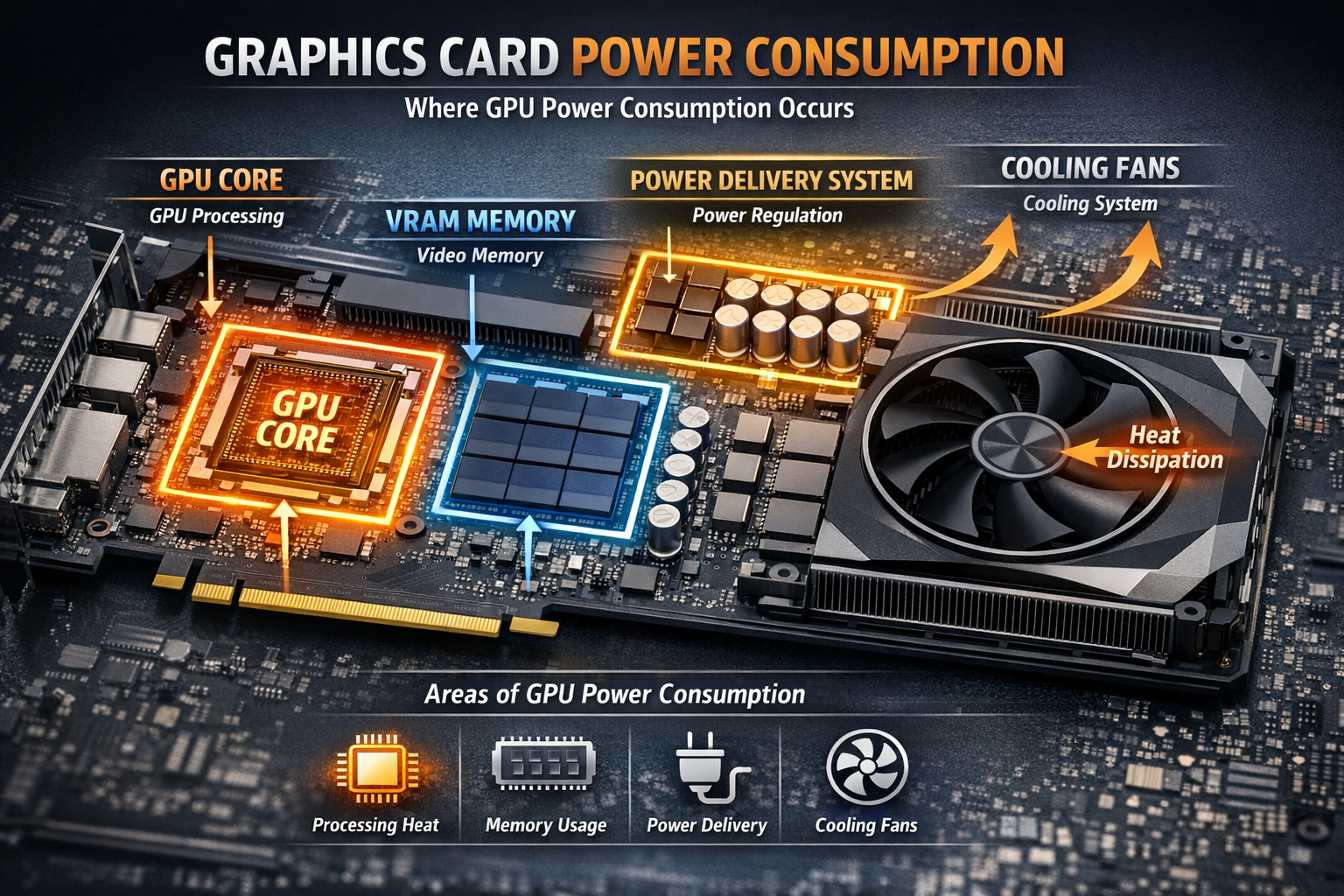

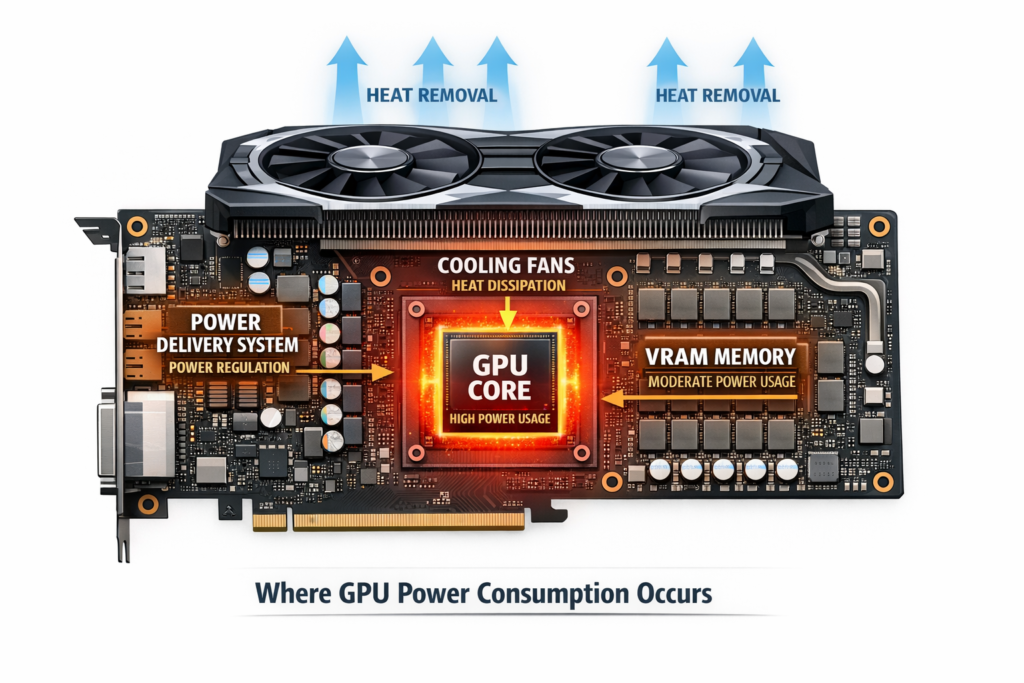

Several components inside the graphics card consume energy:

GPU Core Power

The main processor that performs graphics calculations.

VRAM Power

The graphics memory that stores textures, models, and rendering data.

Board Power

Additional power is used by components such as voltage regulators and cooling fans.

Manufacturers often publish a specification called Total Board Power (TBP), which represents the power the entire graphics card is rated to draw under heavy workloads. NVIDIA lists a comparable figure called Total Graphics Power (TGP).

For instance, if a GPU has a 250-watt board power rating, it may approach that level during demanding tasks like modern gaming or rendering.

Why Do Graphics Cards Use So Much Power?

Modern graphics cards require significant electrical power because they perform extremely complex calculations at very high speeds.

Several design factors increase GPU power usage.

Large numbers of processing cores

High-performance GPUs contain thousands of parallel processing cores. Each core performs calculations every clock cycle.

High clock speeds

Modern GPUs often boost above 2 GHz, which significantly increases energy consumption.

High-speed memory

Advanced GPUs use memory technologies like GDDR6 and GDDR6X, which operate at extremely high data rates.

Advanced graphics technologies

Features such as ray tracing, AI upscaling, and complex lighting systems increase GPU workload and power demand.

For example, when a GPU renders a complex 4K gaming scene, it may perform trillions of calculations every second. This level of computation naturally increases electricity usage.

This is one reason why modern high-end GPUs can reach 350 to 450 watts under heavy load.

How Does GPU Architecture Affect Power Usage?

GPU architecture plays a major role in performance and power efficiency.

Architecture refers to how engineers design the internal structure of the GPU chip. It includes:

- transistor layout

- cache design

- instruction pipelines

- manufacturing process

Newer GPU architectures usually improve performance per watt, meaning they deliver more performance while using similar or lower power.
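Performance per watt is a performance figure divided by the power drawn while achieving it. The sketch below compares two cards using average frames per second (FPS) in the same test scene as the performance measure. The card labels and all numbers are hypothetical placeholders, not benchmark results.

```python
from dataclasses import dataclass


@dataclass
class GpuResult:
    name: str         # hypothetical label, not a real product
    avg_fps: float    # average frames per second in the same test scene
    avg_watts: float  # average board power measured during that test

    def fps_per_watt(self) -> float:
        """Performance per watt: frames delivered per watt drawn."""
        return self.avg_fps / self.avg_watts


# Hypothetical results for an older and a newer architecture.
older = GpuResult("older-architecture card", avg_fps=90, avg_watts=250)
newer = GpuResult("newer-architecture card", avg_fps=100, avg_watts=200)

for card in (older, newer):
    print(f"{card.name}: {card.fps_per_watt():.3f} FPS per watt")

# Relative efficiency gain of the newer card over the older one.
gain = newer.fps_per_watt() / older.fps_per_watt() - 1
print(f"Efficiency improvement: {gain:.0%}")
```

Here the newer card is faster and also draws less power, so its efficiency gain (about 39%) is larger than its raw performance gain (about 11%).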

The semiconductor manufacturing process also affects efficiency.

For example:

- Older GPUs were built using 14-nanometer processes

- Many modern GPUs use 5-nm or 4-nm technology

Smaller transistors need less energy to switch and can run at lower voltages, which reduces heat generation and improves energy efficiency.

Modern GPUs also include larger cache memory, which reduces how often data must travel to VRAM. This helps lower power consumption.

What Is GPU TDP and Why Does It Matter?

GPU TDP (Thermal Design Power) represents the amount of heat the cooling system must dissipate during heavy workloads.

Manufacturers use TDP as a guideline for designing:

- cooling systems

- power delivery

- recommended power supplies

For example, if a GPU has a 300-watt TDP, the cooling system must be able to remove roughly 300 watts of heat under sustained load.

TDP helps users understand three key things:

Cooling requirements

Higher TDP GPUs need stronger cooling solutions.

Power supply needs

Higher TDP increases overall system power demand.

Case airflow requirements

Powerful GPUs release more heat inside the PC case.

However, TDP should be treated as an estimate, not an exact measurement.

TDP vs Actual GPU Power Consumption

Many users assume TDP equals exact power usage, but real-world power draw often varies.

TDP represents expected heat output under typical heavy workloads, while actual GPU power consumption changes constantly based on real-time activity.

For example:

- A 300-watt GPU may use 200 to 250 watts in many games

- In very demanding scenes, it may approach 300 watts

Modern GPUs dynamically adjust clock speeds and power levels depending on temperature, workload, and power limits.

Monitoring software often shows power usage fluctuating during gameplay.
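When you read a monitoring log, a summary of the samples is more informative than any single reading. The sketch below assumes you already have power readings in watts, for example copied from one of the monitoring tools covered later. The sample values here are made up. It compares the average and peak to the card's rated power.

```python
from statistics import mean


def summarize_power(samples_watts: list, rated_watts: float) -> dict:
    """Summarize power samples relative to a rated figure (TDP or TBP)."""
    if not samples_watts:
        raise ValueError("need at least one power sample")
    avg = mean(samples_watts)
    peak = max(samples_watts)
    return {
        "average_w": avg,
        "peak_w": peak,
        "average_pct_of_rating": 100 * avg / rated_watts,
        "peak_pct_of_rating": 100 * peak / rated_watts,
    }


# Hypothetical one-second samples from a 300-watt card during gameplay.
samples = [212.0, 238.5, 247.0, 231.0, 296.5, 225.0]
for key, value in summarize_power(samples, rated_watts=300).items():
    print(f"{key}: {value:.1f}")
```

With these samples, the average is about 81% of the rating and a single demanding moment comes close to the full 300 watts. This matches the pattern described above.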

For a deeper technical explanation of GPU power ratings and thermal design limits, you can also review the official documentation from NVIDIA, which explains how GPU power and thermal limits are defined.

How Many Watts Does a GPU Use on Average?

Average GPU power consumption depends heavily on performance level.

| GPU Category | Typical Power Usage |
| --- | --- |
| Entry-level GPUs | 75 to 120 watts |
| Mid-range GPUs | 150 to 250 watts |
| High-end GPUs | 300 to 450 watts |

Some well-known examples include:

| GPU Model | Approximate Power |
| --- | --- |
| RTX 3060 | 170 W |
| RTX 4070 | 200 W |
| RTX 4090 | 450 W |

Actual usage depends on factors like game settings, resolution, and workload intensity.

Power Usage of Entry-Level GPUs

Entry-level graphics cards focus on efficiency and affordability. These GPUs are typically used for light gaming, media playback, and everyday computing tasks.

Most entry-level GPUs consume 60 to 120 watts.

Examples include:

- GTX 1650: about 75 watts

- RX 6400: about 53 watts

Many of these GPUs do not require additional power connectors. Instead, they draw power directly from the PCIe slot, which can provide up to 75 watts.

This design keeps systems simple and energy efficient.

Power Consumption of Mid-Range GPUs

Mid-range GPUs offer strong gaming performance while maintaining moderate power consumption.

Most cards in this category use 150 to 250 watts during gaming workloads.

Examples include:

- RTX 3060: about 170 watts

- RTX 4060: about 115 watts (an efficient card that falls below the typical range)

- RX 7600: about 165 watts

These GPUs typically require one or two 8-pin PCIe power connectors.
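The number of connectors comes from a simple power budget. Under the commonly cited PCIe limits, the x16 slot supplies up to 75 W and each 8-pin auxiliary connector up to 150 W. The sketch below finds the fewest 8-pin connectors that cover a given board power. It ignores 6-pin and newer 16-pin connectors and the extra headroom manufacturers often build in, so treat it as a rough check rather than how any particular card is designed.

```python
import math

SLOT_WATTS = 75        # PCIe x16 slot limit
EIGHT_PIN_WATTS = 150  # limit per 8-pin PCIe auxiliary connector


def eight_pin_connectors_needed(board_power_watts: float) -> int:
    """Return the minimum number of 8-pin connectors for a board power.

    Assumes the slot delivers its full 75 W and each 8-pin connector its
    full 150 W; real cards may add connectors for headroom.
    """
    remaining = max(0.0, board_power_watts - SLOT_WATTS)
    return math.ceil(remaining / EIGHT_PIN_WATTS)


cards = [("GTX 1650", 75), ("RTX 3060", 170), ("hypothetical 250 W card", 250)]
for name, watts in cards:
    print(f"{name} ({watts} W): {eight_pin_connectors_needed(watts)} x 8-pin")
```

This also explains why many entry-level cards need no extra connector: their entire budget fits within the slot's 75 W.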

They also support advanced graphics features such as ray tracing and AI-based upscaling, which can increase workload intensity.

How Many Watts Do High-End GPUs Use?

High-end graphics cards focus on delivering maximum performance for demanding tasks such as 4K gaming, 3D rendering, and professional workloads.

Power consumption can reach 300 to 450 watts.

Examples include:

- RTX 4080: about 320 watts

- RTX 4090: about 450 watts

- RX 7900 XTX: about 355 watts

These GPUs require large cooling systems and powerful power supplies.

Manufacturers often recommend 750 to 1000-watt PSUs for systems using these cards.
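A common rule of thumb for sizing a PSU is to add up the main components' power draw and leave headroom for load spikes and future upgrades. The sketch below assumes 30% headroom and a 100 W allowance for the rest of the system (motherboard, memory, storage, fans). Both figures are illustrative assumptions, not an industry standard, and the GPU maker's own PSU recommendation should take priority.

```python
# Common retail PSU sizes in watts (illustrative list).
PSU_SIZES = [450, 550, 650, 750, 850, 1000, 1200]


def recommend_psu(gpu_watts: float, cpu_watts: float,
                  other_watts: float = 100, headroom: float = 0.30) -> int:
    """Return the smallest listed PSU size that covers load plus headroom.

    other_watts and headroom are assumptions chosen for illustration.
    """
    target = (gpu_watts + cpu_watts + other_watts) * (1 + headroom)
    for size in PSU_SIZES:
        if size >= target:
            return size
    raise ValueError(f"load of {target:.0f} W exceeds the largest listed PSU")


print(recommend_psu(gpu_watts=170, cpu_watts=105))  # mid-range build
print(recommend_psu(gpu_watts=320, cpu_watts=125))  # RTX 4080-class build
print(recommend_psu(gpu_watts=450, cpu_watts=150))  # RTX 4090-class build
```

With these assumptions the three builds land on 550 W, 750 W, and 1,000 W units. That is in line with the ranges given in this guide.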

Power Requirements for Workstation and AI GPUs

Professional GPUs used for engineering, scientific computing, and AI workloads often consume even more power than gaming cards.

Typical ranges include:

- 250 to 400 watts for professional workstation GPUs

- 400 to 700 watts for advanced AI accelerators

Some data-center GPUs prioritize maximum performance and can operate at very high power levels.

These systems require specialized cooling and high-capacity power delivery.

How Much Power Do Laptop GPUs Use?

Laptop GPUs operate under strict thermal and power limits because portable systems must control heat and battery usage.

Most laptop GPUs consume between 35 and 175 watts.

Typical ranges include:

- Entry-level laptop GPUs: 35 to 60 watts

- Mid-range laptop GPUs: 80 to 120 watts

- High-performance laptop GPUs: 140 to 175 watts

Even when a laptop GPU has the same model name as a desktop version, it usually runs at lower power limits and lower clock speeds.

What Factors Affect GPU Power Consumption?

Several factors determine how much electricity a GPU uses during operation.

Important influences include:

- Game graphics settings: Higher settings increase workload

- Screen resolution: Rendering more pixels requires more power

- GPU clock speed: Higher frequencies increase energy use

- Ray tracing effects: Real-time lighting calculations are demanding

- Driver optimization: Updates can improve efficiency

- Cooling performance: Better cooling allows higher boost clocks

All of these elements combine to determine the GPU’s final power usage.

GPU Power Usage During Gaming vs Idle

When a system is idle, the GPU performs very few tasks.

Typical idle power consumption for modern GPUs is 10 to 30 W.

During gaming, thousands of GPU cores activate to maintain frame rate and render complex scenes.

Gaming power consumption typically ranges from 150 to 450 W, depending on the GPU model.

For example:

An RTX 3060 may use around 15 watts while idle, but during gaming, it can reach approximately 170 watts.

GPU power usage often increases with a higher workload. If you are wondering what normal GPU load looks like, read How Much GPU Usage Is Normal.

How Do Resolution and Graphics Settings Affect GPU Power?

Resolution significantly affects GPU workload.

Higher resolutions require the GPU to render more pixels every frame.

Pixel counts by resolution:

- 1080p: about 2.1 million pixels

- 1440p: about 3.7 million pixels

- 4K: about 8.3 million pixels

Rendering more pixels increases both GPU workload and power usage.
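These pixel counts come from multiplying width by height for the standard 16:9 resolutions. The ratio to 1080p shows how much more per-pixel work each frame can involve. Power usually does not rise in exact proportion, because part of each frame's cost does not depend on resolution.

```python
def pixel_count(width: int, height: int) -> int:
    """Number of pixels the GPU renders per frame at a given resolution."""
    return width * height


RESOLUTIONS = {"1080p": (1920, 1080), "1440p": (2560, 1440), "4K": (3840, 2160)}

base = pixel_count(*RESOLUTIONS["1080p"])
for name, (width, height) in RESOLUTIONS.items():
    pixels = pixel_count(width, height)
    print(f"{name}: {pixels:,} pixels ({pixels / base:.2f}x 1080p)")

# Output:
# 1080p: 2,073,600 pixels (1.00x 1080p)
# 1440p: 3,686,400 pixels (1.78x 1080p)
# 4K: 8,294,400 pixels (4.00x 1080p)
```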

Graphics settings also play a major role.

Features that increase GPU power demand include:

- ray tracing

- high texture quality

- complex shadows

- volumetric lighting

Lowering these settings can significantly reduce GPU watt usage and system heat.

Does Overclocking Increase GPU Power Usage?

Yes. Overclocking raises GPU clock speeds beyond factory settings, which often requires additional voltage to stay stable.

Higher voltage and higher frequency both increase electrical power consumption.

For example:

A GPU that normally uses 200 watts may consume 230 to 250 watts after overclocking.
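A common first-order model for a chip's switching (dynamic) power is P ≈ C·V²·f. In this model, C is the switched capacitance, V is the voltage, and f is the clock frequency, so power scales linearly with frequency and with the square of voltage. The sketch below applies that model to the 200-watt example. It treats the whole board power as dynamic power, which overstates the increase somewhat because memory, fans, and other parts do not scale the same way. Treat the result as a rough estimate.

```python
def overclocked_power(base_watts: float, clock_gain: float,
                      voltage_gain: float) -> float:
    """Estimate power after overclocking using the model P ~ V^2 * f.

    clock_gain and voltage_gain are fractional increases (0.05 = +5%).
    Assumes all board power scales like dynamic switching power.
    """
    return base_watts * (1 + clock_gain) * (1 + voltage_gain) ** 2


# Hypothetical overclock settings applied to a 200-watt GPU.
for clock, volt in [(0.05, 0.0), (0.05, 0.05), (0.08, 0.06)]:
    watts = overclocked_power(200, clock, volt)
    print(f"+{clock:.0%} clock, +{volt:.0%} voltage -> about {watts:.0f} W")
```

The voltage term is squared, so even small voltage increases add more power than the same percentage increase in clock speed.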

Overclocking also increases heat output, so a strong cooling system is necessary to maintain stability.

If you want to understand how overclocking boosts GPU performance and increases power draw, read our detailed guide on What Does Overclocking a GPU Do.

How Do GPU Cooling Systems Affect Power Efficiency?

Cooling systems influence how efficiently a GPU operates.

When temperatures remain low, the GPU can maintain stable clock speeds without needing extra voltage.

Effective cooling solutions include:

- large heatsinks

- multiple fans

- heat pipes

- vapor chambers

Lower temperatures improve electrical efficiency and help prevent thermal throttling, which reduces performance when the GPU becomes too hot.

Higher power usage also increases heat. If you are unsure whether your temperatures are safe, check our guide Is 85 Celsius Hot For GPU.

How Does Power Supply Efficiency Impact GPU Power?

Power supply efficiency determines how much electricity your system draws from the wall outlet.

High-efficiency power supplies waste less energy as heat.

For example, an 80 Plus Gold PSU is rated for about 90% efficiency at half load.

If your PC components require 400 watts internally, a PSU running at 90% efficiency draws roughly 445 watts (400 ÷ 0.9) from the wall.

Using a high-efficiency PSU reduces wasted energy and lowers electricity costs.
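The wall draw is the internal (DC) load divided by the PSU's efficiency at that load. The difference between the two is lost as heat inside the power supply. The efficiency values below are illustrative, since real efficiency varies with load level and input voltage.

```python
def wall_draw(dc_load_watts: float, efficiency: float) -> float:
    """Return AC power drawn from the outlet for a given DC load.

    efficiency is a fraction between 0 and 1 (0.90 = 90%).
    """
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in (0, 1]")
    return dc_load_watts / efficiency


load = 400  # watts required by the PC components
for eff in (0.82, 0.87, 0.90):  # illustrative efficiency levels
    ac = wall_draw(load, eff)
    print(f"{eff:.0%} efficient: {ac:.0f} W from the wall, "
          f"{ac - load:.0f} W lost as heat")
```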

How Can You Check How Many Watts Your GPU Uses?

You can monitor GPU power usage using hardware monitoring software that reads sensors built into the graphics card.

Popular tools include:

- MSI Afterburner

- GPU-Z

- HWiNFO

These programs display real-time information such as:

- GPU power usage

- clock speeds

- temperature

- fan speed

Running these tools during gaming allows you to see how power consumption changes in real time.
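On NVIDIA cards, the `nvidia-smi` command-line tool installed with the driver can report the same sensor data, which is useful for scripted logging. The sketch below polls it once from Python. It assumes an NVIDIA GPU with `nvidia-smi` on the PATH. On other hardware, use the tools listed above instead.

```python
import shutil
import subprocess
from typing import Dict, List, Optional

# Fields accepted by nvidia-smi's --query-gpu option.
FIELDS = ["power.draw", "clocks.gr", "temperature.gpu", "fan.speed"]


def parse_reading(csv_line: str) -> Dict[str, Optional[float]]:
    """Parse one 'noheader,nounits' CSV line into a field -> value dict.

    Values nvidia-smi cannot read (for example '[N/A]') become None.
    """
    values = [v.strip() for v in csv_line.split(",")]
    parsed: Dict[str, Optional[float]] = {}
    for field, value in zip(FIELDS, values):
        try:
            parsed[field] = float(value)
        except ValueError:
            parsed[field] = None
    return parsed


def read_gpu_sensors() -> List[Dict[str, Optional[float]]]:
    """Query every NVIDIA GPU once and return one dict per GPU."""
    if shutil.which("nvidia-smi") is None:
        raise RuntimeError("nvidia-smi not found; is an NVIDIA driver installed?")
    output = subprocess.run(
        ["nvidia-smi", f"--query-gpu={','.join(FIELDS)}",
         "--format=csv,noheader,nounits"],
        capture_output=True, text=True, check=True,
    ).stdout
    return [parse_reading(line) for line in output.strip().splitlines()]


if __name__ == "__main__":
    try:
        for index, reading in enumerate(read_gpu_sensors()):
            print(f"GPU {index}: {reading}")
    except (RuntimeError, subprocess.CalledProcessError) as err:
        print(f"Could not read GPU sensors: {err}")
```

Calling `read_gpu_sensors()` in a loop with a short `time.sleep()` between calls produces a simple power log you can summarize afterward.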

How Can You Reduce GPU Power Consumption?

Reducing GPU power usage can lower system temperatures and electricity costs.

Some practical methods include:

- limiting frame rates in games

- lowering graphics settings

- enabling power-saving driver modes

- reducing screen resolution

- improving case airflow

These adjustments can reduce GPU power usage while maintaining good performance.

FAQs

Does a GPU Use More Power Than a CPU?

Yes. A modern gaming GPU often uses 150 to 450 watts during heavy tasks, while most CPUs use about 65 to 150 watts under load.

Is 300 Watts High for a GPU?

Yes. A 300-watt GPU sits in the high-performance category and is typical of powerful gaming or workstation graphics cards.

Do GPUs Use Power When Idle?

Yes. A GPU still uses around 10 to 30 watts when your computer is idle because it keeps basic display functions active.

How Many Watts Does a Gaming GPU Use?

Most gaming GPUs use between 150 and 350 watts during gameplay, depending on the model, game settings, and screen resolution.

What Power Supply Do You Need for a GPU?

You should choose a PSU based on total system power. Most mid-range GPUs need a 550 to 650-watt power supply, while high-end GPUs often require 750 watts or more.

Conclusion

GPU power consumption depends on the graphics card model, workload intensity, and graphics settings. Most modern GPUs use between 75 and 450 watts during demanding tasks like gaming, rendering, or AI workloads.

Understanding how many watts a GPU uses helps you choose the right power supply, manage electricity usage, and maintain a stable PC system.

Monitoring power usage and adjusting settings when necessary can also improve efficiency, temperature control, and overall system performance.